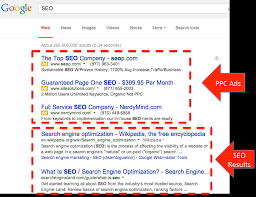

Search engine optimization (SEO) is the process of growing the quality and quantity of website traffic by increasing the visibility of a website or a web page to users of a web search engine. SEO refers to the improvement of unpaid results (known as “natural” or “organic” results) and excludes direct traffic and the purchase of paid placement. Additionally, it may target different kinds of searches, including image search, video search, academic search, news search, and industry-specific vertical search engines. Promoting a site to increase the number of backlinks, or inbound links, is another SEO tactic. By May 2015, mobile search had surpassed desktop search.

This post contains affiliate links, which means that if you use these links to purchase an item or service, I receive a commission at no extra cost to you. See my Affiliate Disclaimer page for details.

Algorithms

As an Internet marketing strategy, SEO considers how search engines work, the computer-programmed algorithms that dictate search engine behavior, what people search for, the actual search terms or keywords typed into search engines, and which search engines are preferred by the targeted audience. SEO is performed because a website receives more visitors from a search engine when it ranks higher on the search engine results page (SERP). These visitors can then potentially be converted into customers.

SEO differs from local search engine optimization in that the latter is focused on optimizing a business’ online presence so that its web pages will be displayed by search engines when a user enters a local search for its products or services. The former instead is more focused on national or international searches.

The History of Search Engine Optimization

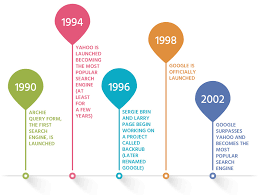

Webmasters and content providers began optimizing websites for search engines in the mid-1990s, as the first search engines were cataloging the early Web. Initially, all webmasters needed to do was submit the address of a page, or URL, to the various engines, which would send a web crawler to crawl that page, extract links to other pages from it, and return information found on the page to be indexed. The process involves a search engine spider downloading a page and storing it on the search engine’s own server. A second program, known as an indexer, extracts information about the page, such as the words it contains, where they are located, and any weight for specific words, as well as all links the page contains. All of this information is then placed into a scheduler for crawling at a later date.
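
To make the indexer step more concrete, here is a minimal sketch in Python. It is only an illustration, not how any real search engine works: the `PageIndexer` class, the `index_page` helper, and the sample page are all made up for this example. It records where each word appears and collects the links a crawler could visit later.

```python
from html.parser import HTMLParser
import re


class PageIndexer(HTMLParser):
    """Toy indexer: records word positions and outgoing links for one page."""

    def __init__(self):
        super().__init__()
        self.positions = {}  # word -> list of positions within the page text
        self.links = []      # href values found in <a> tags
        self._count = 0      # running word counter

    def handle_starttag(self, tag, attrs):
        # Collect link targets so a scheduler could crawl them later.
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)

    def handle_data(self, data):
        # Lowercase the text and note the position of every word.
        for word in re.findall(r"[a-z0-9]+", data.lower()):
            self.positions.setdefault(word, []).append(self._count)
            self._count += 1


def index_page(html):
    """Return (word positions, outgoing links) for one HTML document."""
    indexer = PageIndexer()
    indexer.feed(html)
    indexer.close()
    return indexer.positions, indexer.links


# Hypothetical page used only for demonstration.
page = '<html><body><h1>Fresh coffee</h1><p>Buy coffee <a href="/beans">beans</a></p></body></html>'
positions, links = index_page(page)
print(positions["coffee"])  # positions where "coffee" occurs
print(links)                # links to hand to the crawl scheduler
```

A real indexer would also skip script and style content and assign weights to words (for example, words in headings); this sketch leaves those parts out.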

Black Hat and White Hat SEO practitioners

Website owners recognized the value of a high ranking and visibility in search engine results, creating an opportunity for both black hat and white hat SEO practitioners. According to industry analyst Danny Sullivan, the phrase “search engine optimization” probably came into use in 1997. Sullivan credits Bruce Clay as one of the first people to popularize the term. On May 2, 2007, Jason Gambert attempted to trademark the term SEO by convincing the Trademark Office in Arizona that SEO is a “process” involving manipulation of keywords and not a “marketing service.”

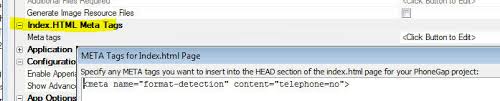

Meta Tag or Index Files

Early versions of search algorithms relied on webmaster-provided information such as the keyword meta tag or index files in engines like ALIWEB. Meta tags provide a guide to each page’s content. Using metadata to index pages was found to be less than reliable, however, because the webmaster’s choice of keywords in the meta tag could potentially be an inaccurate representation of the site’s actual content. Inaccurate, incomplete, and inconsistent data in meta tags could and did cause pages to rank for irrelevant searches. Web content providers also manipulated some attributes within the HTML source of a page in an attempt to rank well in search engines. By 1997, search engine designers recognized that webmasters were making efforts to rank well in their search engines, and that some webmasters were even manipulating their rankings in search results by stuffing pages with excessive or irrelevant keywords. Early search engines, such as AltaVista and Infoseek, adjusted their algorithms to prevent webmasters from manipulating rankings.

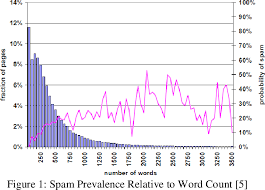

Adversarial Information Retrieval on the Web

By relying so much on factors such as keyword density which were exclusively within a webmaster’s control, early search engines suffered from abuse and ranking manipulation. To provide better results to their users, search engines had to adapt to ensure their results pages showed the most relevant search results, rather than unrelated pages stuffed with numerous keywords by unscrupulous webmasters. This meant moving away from heavy reliance on term density to a more holistic process for scoring semantic signals. Since the success and popularity of a search engine is determined by its ability to produce the most relevant results to any given search, poor quality or irrelevant search results could lead users to find other search sources. Search engines responded by developing more complex ranking algorithms, taking into account additional factors that were more difficult for webmasters to manipulate. In 2005, an annual conference, AIRWeb (Adversarial Information Retrieval on the Web), was created to bring together practitioners and researchers concerned with search engine optimization and related topics.
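
To show why keyword density was so easy to abuse, here is a small Python sketch. The `keyword_density` function and both sample sentences are invented for this example, and the function handles single-word keywords only. It simply divides the number of times a keyword appears by the total number of words.

```python
import re


def keyword_density(text, keyword):
    """Share of the words in `text` that equal `keyword` (case-insensitive)."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if not words:
        return 0.0
    return words.count(keyword.lower()) / len(words)


# Hypothetical page texts: one written for readers, one stuffed with a keyword.
honest = "We roast coffee beans in small batches and ship them weekly."
stuffed = "Coffee coffee cheap coffee buy coffee best coffee coffee deals."

print(round(keyword_density(honest, "coffee"), 2))
print(round(keyword_density(stuffed, "coffee"), 2))
```

An engine that ranked on this number alone would prefer the stuffed text, even though it tells the reader almost nothing. The author of the page controls the number completely.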

Banned from the Search Results

Companies that employ overly aggressive techniques can get their client websites banned from the search results. In 2005, the Wall Street Journal reported on a company, Traffic Power, which allegedly used high-risk techniques and failed to disclose those risks to its clients. Wired magazine reported that the same company sued blogger and SEO Aaron Wall for writing about the ban. Google’s Matt Cutts later confirmed that Google did in fact ban Traffic Power and some of its clients.

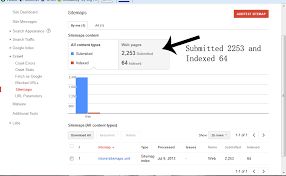

Google Sitemaps program

Some search engines have also reached out to the SEO industry, and are frequent sponsors and guests at SEO conferences, web chats, and seminars. Major search engines provide information and guidelines to help with website optimization. Google has a Sitemaps program that helps webmasters learn whether Google is having any problems indexing their website, and it also provides data on Google traffic to the website. Bing Webmaster Tools provides a way for webmasters to submit a sitemap and web feeds, allows users to determine the “crawl rate”, and tracks the index status of web pages.
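
For readers who have never seen a sitemap, here is a rough sketch of how one could be generated in Python. It assumes the public sitemaps.org XML format, which this post does not describe. The `build_sitemap` helper, the example.com URLs, and the dates are placeholders made up for illustration.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol (an assumption; not from this post).
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Build a minimal sitemap from (location, last-modified date) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in urls:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    return ET.tostring(urlset, encoding="unicode")


# Placeholder URLs and dates, for demonstration only.
sitemap_xml = build_sitemap([
    ("https://example.com/", "2024-01-15"),
    ("https://example.com/blog/seo-history", "2024-02-01"),
])
print(sitemap_xml)
```

The resulting text would normally be saved as a file on the site and then submitted through the search engine's webmaster tools.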

In 2015, it was reported that Google was developing and promoting mobile search as a key feature within future products. In response, many brands began to take a different approach to their Internet marketing strategies.

The Relationship Search Engine Optimization Has with Google

In 1998, two graduate students at Stanford University, Larry Page and Sergey Brin, developed “Backrub”, a search engine that relied on a mathematical algorithm to rate the prominence of web pages. The number calculated by the algorithm, PageRank, is a function of the quantity and strength of inbound links. PageRank estimates the likelihood that a given page will be reached by a web user who randomly surfs the web and follows links from one page to another. In effect, this means that some links are stronger than others, as a page with a higher PageRank is more likely to be reached by the random web surfer.
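
The random surfer idea can be sketched in a few lines of Python. This is a simplified illustration, not Google's actual implementation: the four-page `web` graph is invented, and the damping factor of 0.85 (the chance that the surfer follows a link rather than jumping to a random page) is an assumption that does not come from this post.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Estimate the probability that a random surfer lands on each page.

    `links` maps every page to the list of pages it links to. Every
    link target must also appear as a key.
    """
    pages = list(links)
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}  # start with equal probability
    for _ in range(iterations):
        # Chance of arriving by a random jump instead of a link.
        new_rank = {p: (1.0 - damping) / n for p in pages}
        for page, outlinks in links.items():
            # A page with no outlinks spreads its rank over all pages.
            targets = outlinks if outlinks else pages
            share = damping * rank[page] / len(targets)
            for target in targets:
                new_rank[target] += share
        rank = new_rank
    return rank


# Hypothetical four-page site: nothing links to "orphan".
web = {
    "home": ["about", "blog"],
    "about": ["home"],
    "blog": ["home", "about"],
    "orphan": ["home"],
}
ranks = pagerank(web)
for page, score in sorted(ranks.items(), key=lambda item: -item[1]):
    print(page, round(score, 3))
```

In this toy graph, "home" ends up with the highest score because every other page links to it, while "orphan" only receives the small random-jump share. A link from "home" is also worth more than a link from "orphan", which is the sense in which some links are stronger than others.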

Page and Brin founded Google search engine in 1998

Page and Brin founded Google in 1998. Google attracted a loyal following among the growing number of Internet users, who liked its simple design. Off-page factors (such as PageRank and hyperlink analysis) were considered as well as on-page factors (such as keyword frequency, meta tags, headings, links, and site structure) to enable Google to avoid the kind of manipulation seen in search engines that only considered on-page factors for their rankings. Although PageRank was more difficult to game, webmasters had already developed link-building tools and schemes to influence the Inktomi search engine, and these methods proved similarly applicable to gaming PageRank. Many sites focused on exchanging, buying, and selling links, often on a massive scale. Some of these schemes, or link farms, involved the creation of thousands of sites for the sole purpose of link spamming.

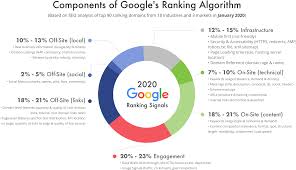

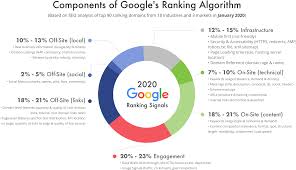

Components of Google’s Ranking Algorithm

By 2004, search engines had incorporated a wide range of undisclosed factors in their ranking algorithms to reduce the impact of link manipulation. In June 2007, The New York Times’ Saul Hansell stated Google ranks sites using more than 200 different signals. The leading search engines, Google, Bing, and Yahoo, do not disclose the algorithms they use to rank pages. Some SEO practitioners have studied different approaches to search engine optimization and have shared their personal opinions. Patents related to search engines can provide information to better understand search engines. In 2005, Google began personalizing search results for each user, crafting results for logged-in users based on their history of previous searches.

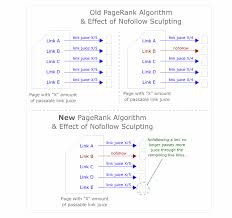

PageRank Sculpting

In 2007, Google announced a campaign against paid links that transfer PageRank. On June 15, 2009, Google disclosed that it had taken measures to mitigate the effects of PageRank sculpting by use of the nofollow attribute on links. Matt Cutts, a well-known software engineer at Google, announced that Googlebot would no longer treat nofollowed links in the same way, to prevent SEO service providers from using nofollow for PageRank sculpting. As a result of this change, the use of nofollow led to evaporation of PageRank. To work around this, SEO engineers developed alternative techniques that replace nofollowed tags with obfuscated JavaScript and thus permit PageRank sculpting. Additionally, several solutions have been suggested that include the use of iframes, Flash, and JavaScript.
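
For anyone unsure what the nofollow attribute looks like in practice, here is a short Python sketch that sorts the links on a page into followed and nofollowed groups. The `LinkClassifier` class and the HTML snippet are made up for this example, and the code says nothing about how Googlebot itself processes links.

```python
from html.parser import HTMLParser


class LinkClassifier(HTMLParser):
    """Split <a> links into followed and nofollowed lists."""

    def __init__(self):
        super().__init__()
        self.followed = []
        self.nofollowed = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attr_map = dict(attrs)
        href = attr_map.get("href")
        if not href:
            return
        # rel can hold several space-separated values, e.g. "nofollow noopener".
        rel_tokens = (attr_map.get("rel") or "").lower().split()
        if "nofollow" in rel_tokens:
            self.nofollowed.append(href)
        else:
            self.followed.append(href)


# Hypothetical markup for demonstration.
snippet = """
<a href="/pricing">Pricing</a>
<a href="https://example.com/ad" rel="nofollow noopener">Sponsor</a>
<a href="/login" rel="NoFollow">Log in</a>
"""
classifier = LinkClassifier()
classifier.feed(snippet)
classifier.close()
print(classifier.followed)
print(classifier.nofollowed)
```

Sculpting meant marking less important links (such as the login link here) as nofollow, in the hope that the remaining links would pass along a larger share of the page's PageRank.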

Google Caffeine

In December 2009, Google announced it would be using the web search history of all its users in order to populate search results. On June 8, 2010, a new web indexing system called Google Caffeine was announced. Designed to allow users to find news results, forum posts, and other content much sooner after publishing than before, Google Caffeine changed the way Google updated its index. According to Carrie Grimes, the software engineer who announced Caffeine for Google, “Caffeine provides 50 percent fresher results for web searches than our last index…” Google Instant, a real-time search feature, was introduced in late 2010 in an attempt to make search results more timely and relevant. Historically, site administrators have spent months or even years optimizing a website to increase search rankings. With the growth in popularity of social media sites and blogs, the leading engines made changes to their algorithms to allow fresh content to rank quickly within the search results.

Google Hummingbird

In February 2011, Google announced the Panda update, which penalizes websites containing content duplicated from other websites and sources. Historically, websites have copied content from one another and benefited in search engine rankings by engaging in this practice. However, Google implemented a new system that punishes sites whose content is not unique. The 2012 Google Penguin update attempted to penalize websites that used manipulative techniques to improve their rankings on the search engine. Although Google Penguin has been presented as an algorithm aimed at fighting web spam, it really focuses on spammy links by gauging the quality of the sites the links are coming from. The 2013 Google Hummingbird update featured an algorithm change designed to improve Google’s natural language processing and semantic understanding of web pages. Hummingbird’s language processing system falls under the newly recognized term “conversational search”, where the system pays more attention to each word in the query in order to match pages to the meaning of the whole query rather than to a few words. For content publishers and writers, Hummingbird is intended to resolve issues by getting rid of irrelevant content and spam, allowing Google to surface high-quality content and to rely on its creators as “trusted” authors.

In October 2019, Google announced it would start applying BERT models for English-language search queries in the US. Bidirectional Encoder Representations from Transformers (BERT) was another attempt by Google to improve its natural language processing, this time in order to better understand the search queries of its users. In terms of search engine optimization, BERT was intended to connect users more easily to relevant content and to increase the quality of traffic coming to websites that rank on the search engine results page.

I hope that you have really enjoyed this post.

Please Leave All Comments in the Comment Box Below ↓

Hey pal,

Thanks for this amazing review of search engine optimization (SEO). I have read it three times just to get the most out of it. If I may say, you did it with perfection and nailed everything from the history to now, and that is not something everyone does.

I really appreciate your hard work for sharing!

Hello,

Thank you for considering Search Engine Optimization an encouraging article that you enjoyed reading so much, that you read it three times. It pleases me that you find this article to be very helpful and informative.

You are most certainly welcome for the hard work and sharing of this page.

Best Of Blessings To You My Friend!

Hi Jerry,

Wow, this is an extremely detailed and informative post on Search Engine Optimization and also where it all started and the role it plays. Many of the components that you mention, I had never heard of, so great to discover it here.

It is good to see the difference between Black Hat SEO and White Hat SEO, terms that I have come across before, but didn’t really know the difference between them, apart from keyword stuffing.

Relevant and quality content is certainly very important to Google and other search engines.

Hello,

Thank you for considering Search Engine Optimization a very detailed and informative post on Search Engine Optimization.

We are in a technology era and we need to stay up to date with the constant changes the internet has to offer by providing the most relevant and quality content available in order to remain a part of this marketing strategy.

All The Best To You My Friend!

Hi Jerry,

This is the best article on SEO that I have ever read. My sincere compliments. You have traced the history of SEO and arrived at where it stands today. Its relationship with Google is beautifully brought out too. I hope you keep putting out such blog posts in the future. This has been great learning. Google Caffeine and Google Hummingbird have been explained beautifully too. You are indeed an expert in your niche.

Thank you.

Aparna

Welcome,

Thank you for committing a portion of your time to read, comment, and considering this post to be useful. I really appreciate your remarks and it definitely pleases me to learn that this is considered interesting information to you.

Blessings And Unlimited Success To You My Friend!

Hello there,

I have just been introduced to an online means of making money, and seeing what search engines can do to boost your sites makes it really important, and I would be really happy to get started with my blog, so I can make use of it to better my business as we go on.

Thanks for sharing!

Good day, it pleases me to reply to your comment,

I also agree that seeing what search engines can do to boost your sites makes it really important, and it depends largely on hard work and dedication after that point to determine the outcome of our success.

Wishing you all the best!

Thank you very much for choosing search engine optimization as a topic to tell us about.

Not many people can really teach us about a topic like this one, and I’m happy to learn about it. I just started my own website and wanted to learn how to get my posts to a much larger audience, and you have spoken very well on the topic.

Thank you very much for this good explanation.

Good day,

I am so glad that you find this article suitable for you.

GOD Bless!