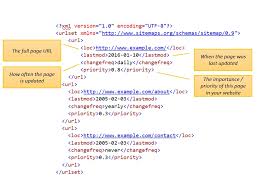

The leading search engines, such as Google, Bing and Yahoo!, use crawlers to find pages for their algorithmic search results. Pages that are linked from other pages already in a search engine’s index do not need to be submitted because they are found automatically. The Yahoo! Directory and DMOZ, two major directories which closed in 2014 and 2017 respectively, both required manual submission and human editorial review. Google offers Google Search Console, through which an XML Sitemap feed can be created and submitted for free to ensure that all pages are found, especially pages that are not discoverable by automatically following links. This is in addition to Google’s URL submission console. Yahoo! formerly operated a paid submission service that guaranteed crawling for a cost per click; however, this practice was discontinued in 2009.
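
As a rough illustration of what an XML Sitemap feed contains, here is a minimal Python sketch that builds one from a list of page URLs. The `build_sitemap` function and the `example.com` addresses are made up for this example; the only fixed parts are the `urlset`, `url` and `loc` element names and the namespace from the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return an XML sitemap (as a string) listing the given page URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages; the second one is not linked from anywhere else,
# so a crawler following links alone might never find it.
sitemap_xml = build_sitemap([
    "https://www.example.com/",
    "https://www.example.com/orphan-page.html",
])
print(sitemap_xml)
```

The resulting file would typically be saved as `sitemap.xml` on the site and then submitted through Google Search Console.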

Search engine crawlers may look at a number of different factors when crawling a site. Not every page is indexed by the search engines. The distance of pages from the root directory of a site may also be a factor in whether or not pages get crawled.

The latest version of Chromium

Today, most people search on Google using a mobile device. In November 2016, Google announced a major change to the way it crawls websites and started to make its index mobile-first, which means the mobile version of a given website becomes the starting point for what Google includes in its index. In May 2019, Google updated the rendering engine of its crawler to the latest version of Chromium (74 at the time of the announcement). Google indicated that it would regularly update the Chromium rendering engine to the latest version. In December 2019, Google began updating the User-Agent string of its crawler to reflect the latest Chrome version used by its rendering service. The delay between the two changes was to allow webmasters time to update code that responded to particular bot User-Agent strings. Google ran evaluations and felt confident the impact would be minor.
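
To see why code tied to one particular bot User-Agent string can break when the Chrome version changes, here is a small Python sketch. The `is_googlebot` function is a made-up helper, and the three User-Agent strings are only illustrative samples (the Chrome version numbers are placeholders). The idea is to look for the stable `Googlebot` token instead of comparing against one exact string.

```python
import re

# Match the stable product token rather than one exact User-Agent string.
GOOGLEBOT_TOKEN = re.compile(r"\bGooglebot\b", re.IGNORECASE)


def is_googlebot(user_agent: str) -> bool:
    """Return True if the User-Agent claims to be Googlebot."""
    return bool(GOOGLEBOT_TOKEN.search(user_agent))


# Illustrative samples only; real strings carry whatever Chrome version
# the rendering service currently uses.
legacy_ua = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
evergreen_ua = (
    "Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; "
    "Googlebot/2.1; +http://www.google.com/bot.html) Chrome/74.0.3729.131 Safari/537.36"
)
browser_ua = (
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/74.0.3729.131 Safari/537.36"
)

# A brittle check: exact comparison stops working once the string changes.
brittle_match = evergreen_ua == legacy_ua

print(is_googlebot(legacy_ua), is_googlebot(evergreen_ua), is_googlebot(browser_ua))
```

Keep in mind that a User-Agent header can be faked by anyone, so a check like this only tells you what the visitor claims to be.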

Avoiding Undesirable Content

To avoid undesirable content in the search indexes, webmasters can instruct spiders not to crawl certain files or directories through the standard robots.txt file in the root directory of the domain. Additionally, a page can be explicitly excluded from a search engine’s database by using a meta tag specific to robots (usually <meta name="robots" content="noindex">). When a search engine visits a site, the robots.txt located in the root directory is the first file crawled. The robots.txt file is then parsed and instructs the robot as to which pages are not to be crawled. As a search engine crawler may keep a cached copy of this file, it may on occasion crawl pages a webmaster does not wish crawled. Pages typically prevented from being crawled include login-specific pages such as shopping carts and user-specific content such as search results from internal searches. In March 2007, Google warned webmasters that they should prevent indexing of internal search results because those pages are considered search spam.
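
Here is a small Python sketch of how a well-behaved crawler might read robots.txt rules before fetching a page, using the standard library’s `urllib.robotparser`. The rules, the `ExampleBot` name, the `may_crawl` helper and the `example.com` paths are all made up for this example; they simply block a shopping cart and internal search results, as described above.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content blocking cart and internal search pages.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /search
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def may_crawl(path, agent="ExampleBot"):
    """Return True if the rules above allow `agent` to crawl `path`."""
    return parser.can_fetch(agent, "https://www.example.com" + path)


for path in ("/products/shoes", "/cart/checkout", "/search?q=shoes"):
    print(path, may_crawl(path))
```

Note that robots.txt only controls crawling. To keep an individual page out of the index, the robots meta tag mentioned above is the usual tool.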

Increasing The Prominence of A Web-page

A variety of methods can increase the prominence of a web-page within the search results. Cross linking between pages of the same website to provide more links to important pages may improve their visibility. Writing content that includes frequently searched keyword phrases, so as to be relevant to a wide variety of search queries, will tend to increase traffic. Updating content so as to keep search engines crawling back frequently can give additional weight to a site. Adding relevant keywords to a web page’s metadata, including the title tag and meta description, will tend to improve the relevancy of a site’s search listings, thus increasing traffic. URL canonicalization of web pages accessible via multiple URLs, using the canonical link element or 301 redirects, can help make sure links to different versions of the URL all count towards the page’s link popularity score.
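
To make URL canonicalization a little more concrete, here is a minimal Python sketch that maps several versions of the same address to one preferred URL and builds the matching canonical link element. The normalization rules are assumptions chosen for this example (force https, lowercase the host, drop `index.html`, fragments and `utm_` tracking parameters), and the `canonical_url` and `canonical_link_element` names are made up; every site has to pick its own preferred form.

```python
import html
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Query parameters starting with these prefixes are treated as tracking noise.
TRACKING_PREFIXES = ("utm_",)


def canonical_url(url: str) -> str:
    """Map different versions of a URL to one preferred form (example rules)."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    path = parts.path or "/"
    if path.endswith("/index.html"):
        path = path[: -len("index.html")]
    kept = [(k, v) for k, v in parse_qsl(parts.query)
            if not k.startswith(TRACKING_PREFIXES)]
    # Always use https and drop the fragment.
    return urlunsplit(("https", host, path, urlencode(kept), ""))


def canonical_link_element(url: str) -> str:
    """Build the canonical link element for the page's <head>."""
    return '<link rel="canonical" href="{}">'.format(html.escape(canonical_url(url)))


variants = [
    "http://WWW.Example.com/shoes/index.html?utm_source=news#top",
    "https://www.example.com/shoes/",
]
for variant in variants:
    print(canonical_link_element(variant))
```

Both variants end up pointing at the same address, which is the effect the canonical link element or a 301 redirect is meant to achieve.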

Two Broad SEO Technique Categories

SEO techniques can be classified into two broad categories: techniques that search engine companies recommend as part of good design (“white hat”), and those techniques of which search engines do not approve (“black hat”). The search engines attempt to minimize the effect of the latter, among them spamdexing. Industry commentators have classified these methods, and the practitioners who employ them, as either white hat SEO, or black hat SEO. White hats tend to produce results that last a long time, whereas black hats anticipate that their sites may eventually be banned either temporarily or permanently once the search engines discover what they are doing.

White hat SEO

An SEO technique is considered white hat if it conforms to the search engines’ guidelines and involves no deception. As the search engine guidelines are not written as a series of rules or commandments, this is an important distinction to note. White hat SEO is not just about following guidelines but is about ensuring that the content a search engine indexes and subsequently ranks is the same content a user will see. White hat advice is generally summed up as creating content for users, not for search engines, and then making that content easily accessible to the online “spider” algorithms, rather than attempting to trick the algorithm from its intended purpose. White hat SEO is in many ways similar to web development that promotes accessibility, although the two are not identical.

Black hat SEO

Black hat SEO attempts to improve rankings in ways that are disapproved of by the search engines, or involve deception. One black hat technique uses hidden text, either as text colored similarly to the background, in an invisible div, or positioned off screen. Another method gives a different page depending on whether the page is being requested by a human visitor or a search engine, a technique known as cloaking. Another category sometimes used is grey hat SEO. This is in between black hat and white hat approaches, where the methods employed avoid the site being penalized but do not aim to produce the best content for users. Grey hat SEO is entirely focused on improving search engine rankings.

Black or Grey hat methods

Search engines may penalize sites they discover using black or grey hat methods, either by reducing their rankings or eliminating their listings from their databases altogether. Such penalties can be applied either automatically by the search engines’ algorithms, or by a manual site review. One example was the February 2006 Google removal of both BMW Germany and Ricoh Germany for use of deceptive practices. Both companies, however, quickly apologized, fixed the offending pages, and were restored to Google’s search engine results page.

Not Always An Appropriate Strategy

SEO is not an appropriate strategy for every website, and other Internet marketing strategies can be more effective, such as paid advertising through pay per click (PPC) campaigns, depending on the site operator’s goals. Search engine marketing (SEM) is the practice of designing, running and optimizing search engine ad campaigns. Its difference from SEO is most simply depicted as the difference between paid and unpaid priority ranking in search results. SEM focuses on prominence more than relevance; website developers should treat SEM as highly important for visibility, as most users navigate to the primary listings of their search.

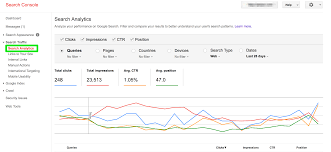

Google Search Console

A successful Internet marketing campaign may also depend upon building high quality web pages to engage and persuade internet users, setting up analytics programs to enable site owners to measure results, and improving a site’s conversion rate. In November 2015, Google released a full 160-page version of its Search Quality Rating Guidelines to the public, which revealed a shift in its focus towards “usefulness” and mobile search. In recent years the mobile market has exploded, overtaking the use of desktops, as shown by StatCounter in October 2016, when it analyzed 2.5 million websites and found that 51.3% of the pages were loaded by a mobile device. Google has been one of the companies capitalizing on the popularity of mobile usage by encouraging websites to use its Google Search Console and the Mobile-Friendly Test, which allow companies to measure their websites against the search engine results and determine how user-friendly they are.

User Web Accessibility

SEO may generate an adequate return on investment. However, search engines are not paid for organic search traffic, their algorithms change, and there are no guarantees of continued referrals. Due to this lack of guarantee and the uncertainty, a business that relies heavily on search engine traffic can suffer major losses if the search engines stop sending visitors. Search engines can change their algorithms, impacting a website’s search engine ranking, possibly resulting in a serious loss of traffic. According to Google’s CEO, Eric Schmidt, Google made over 500 algorithm changes in 2010, almost 1.5 per day. It is considered a wise business practice for website operators to liberate themselves from dependence on search engine traffic. In addition to accessibility in terms of web crawlers (addressed above), user web accessibility has become increasingly important for SEO.

Vary from Market to Market

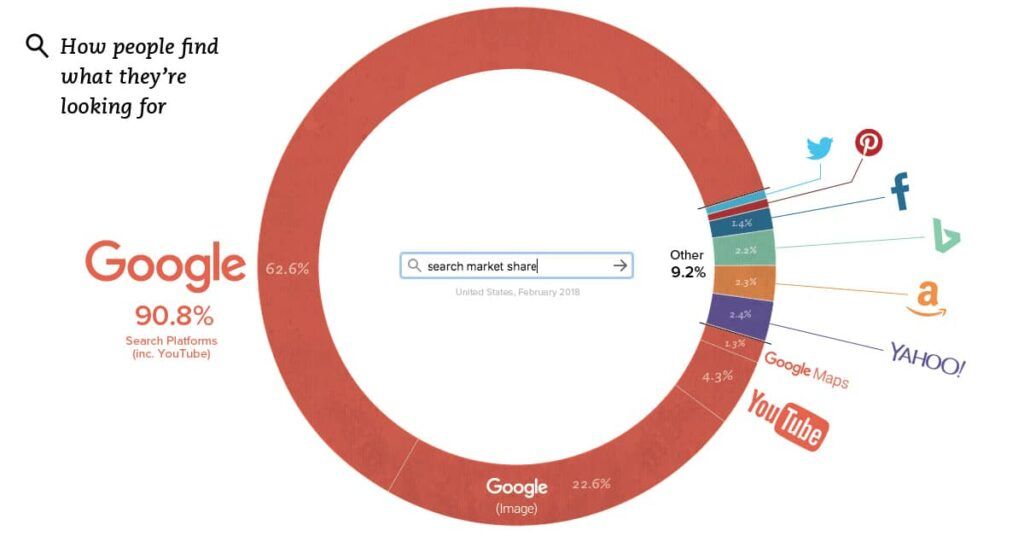

Optimization techniques are highly tuned to the dominant search engines in the target market. The search engines’ market shares vary from market to market, as does competition. In 2003, Danny Sullivan stated that Google represented about 75% of all searches. In markets outside the United States, Google’s share is often larger, and Google remains the dominant search engine worldwide as of 2007. As of 2006, Google had an 85–90% market share in Germany. While there were hundreds of SEO firms in the US at that time, there were only about five in Germany. As of June 2008, the market share of Google in the UK was close to 90% according to Hitwise. That market share is achieved in a number of countries.

As of 2009, there are only a few large markets where Google is not the leading search engine. In most cases, when Google is not leading in a given market, it is lagging behind a local player. The most notable example markets are China, Japan, South Korea, Russia and the Czech Republic where respectively Baidu, Yahoo! Japan, Naver, Yandex and Seznam are market leaders.

Successful search optimization for international markets may require professional translation of web pages, registration of a domain name with a top level domain in the target market, and web hosting that provides a local IP address. Otherwise, the fundamental elements of search optimization are essentially the same, regardless of language.

I hope that you have really enjoyed this post.

Awesome Jerry,

This is a very well structured and informative article about Search Engine Optimization. It all began with the role it plays. I haven’t heard many of the points you mentioned. It is my first time to see the difference between Black Hat SEO and White Hat SEO. I didn’t know the difference between them beside the keyword usage and the website setup.

Thank you for the information and keep up with the great work!

Juma

Hello,

Thank you for considering this a very interesting article by calling it Awesome, Informative, and for announcing it to be information you have never heard of before. It is my pleasure to create an eye opener of information to be available to you for your future awareness of Methods of Getting Indexed.

You are most certainly welcome for the sharing of this helpful information. Thank you again for taking the time to read and comment on this article.

Sincere Regards To You My Friend!

Very interesting, Detailed, and informative article about SEO.

It is an eye opener of information to be available for everyone to understand Methods of Getting Indexed. I have vaguely heard many of the points you mentioned. I am a little bit aware about the difference between Black Hat SEO and White Hat SEO.

I am grateful that you have written such a much needed article.

Regards,

Rajesh

Hello,

Thanks a lot for your comment. When starting this site I wanted to write about things that I want to see more articles about in the world around me.

It takes work but I plan not to publish anything on here that I’m not willing to go through myself. I most definitely agree that there may be different outcomes for each person, and of course, there may be many similarities.

It would definitely help with spreading this message, if people would share these types of articles on their own social media platforms.

Thank you again for your comment.

Have an Abundantly Blessed Day!

Hello Jerry,

I am a newbie in affiliate marketing, and my 2 websites have just been set up.

Thank you so much for making it simple for us, newbies, to understand how to get indexed. You made it easier for me to gain more knowledge on search engines, and many other things that have opened my mind.

I have a question here – Is it possible to have both SEO and SEM in my website?

This may seem a silly or stupid question, but I’m raising it anyway. You’ve been so patient to write an awesome, informative article such as this one. So I believe you will extend more of your patience and generosity.

Thank you very much and God bless you more,

Chuna

Good day,

SEO is increasing the amount of website visitors by getting the site to appear high on results returned by a search engine.

SEM is considered internet marketing that increases a site’s visibility through organic search engines results and advertising.

Not only is it possible, but SEM includes SEO as well as other search marketing tactics that rely on it to operate at its maximum capacity.

Have an Abundantly Blessed Day!

I usually request for my updated pages or new pages on my site to get indexed by going to Google Search Console and by first testing it live to make sure that Google can properly see the page, and then I request indexing for that page.

I know this depends on your crawl budget, but for me it is usually done within a few hours, and the page has always been indexed or re-indexed by the next day.

Greetings,

Thank you for reading and commenting on Methods of Getting Indexed.

Favor Filled Blessings To You!

Thank you Jerry for sharing this article.

You’ve shared some very vital information here that I’m sure will be especially useful to a lot of people who are interested in increasing their website traffic.

Indexing sounds like a great way to grow your website but I’m wondering how it differs from search engine optimization and if you could explain that for me.

Welcome, my friend,

Crawling and indexing are two distinct things, and this is commonly misunderstood in the SEO industry. Crawling means that Googlebot looks at all the content and code on the page and analyzes it. Indexing means that the page is eligible to show up in Google’s search results.

Abundant Regards To You My Friend!

Your post is really self explanatory, and reading it really makes me understand how easy it can be for me to also get indexed too.

This is something I am willing to give a try although I just started my website. Your post is great for a person like me who understands the importance of getting indexed by the search engines.

Good day, it pleases me to reply to your comment,

Thank you for your feedback. I’m happy to hear you enjoyed the Methods of Getting Indexed.

Brighter Days To You My Friend!

Great content which was very informative and easy to follow.

There were topics I had no knowledge of, and I was very engaged throughout. There was plenty of visual information on the web-page, and the author wrote with great authority on subjects he clearly had experience of. In my opinion, it’s hard to find genuine information regarding SEO, and this page had a personal touch that was very believable.

Well written!

Hello,

You sound like a pro while describing these products, and you are most certainly welcome.

Have A Blessing Filled Day!